Claude Fast vs OpenMark AI

Side-by-side comparison to help you choose the right product.

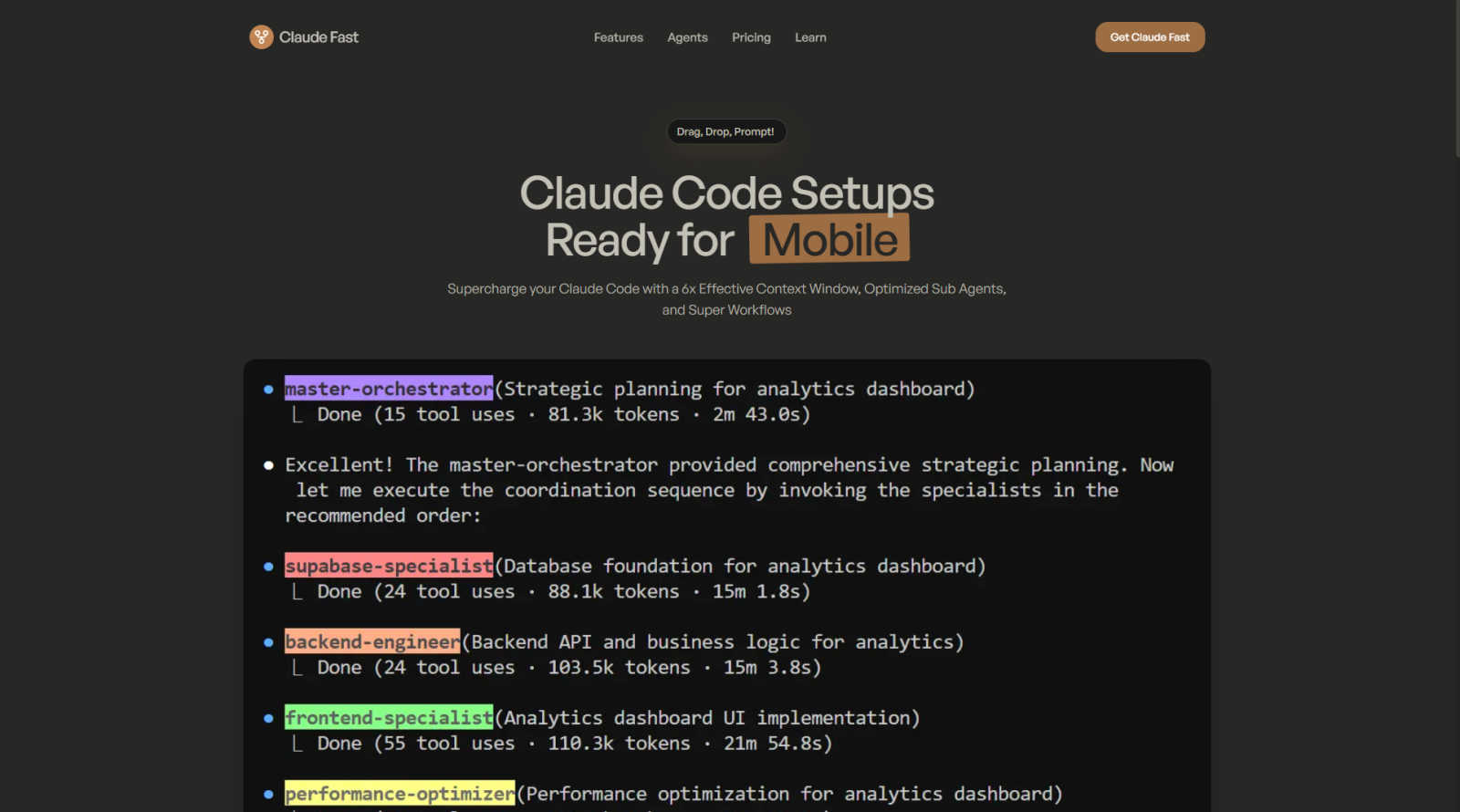

Claude Fast

Claude Fast supercharges Claude Code with smart agents and seamless workflows for efficient AI development.

Last updated: March 1, 2026

OpenMark AI

OpenMark AI benchmarks more than 100 LLMs against your specific tasks, reporting cost, speed, quality, and stability with no setup required.

Last updated: March 26, 2026

Feature Comparison

Claude Fast

Skill Activation Hook

This foundational feature ensures Claude Code never forgets its available capabilities. It automatically appends relevant skill recommendations to your prompts before Claude processes them, guaranteeing 100% adherence and context-aware skill loading. Instead of you manually reminding the AI what it can do, the system intelligently surfaces the right tools at the right time, maintaining an uninterrupted and efficient development flow.
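As a rough illustration of the idea (not Claude Fast's actual implementation), a prompt hook of this kind can be sketched as a function that scans the prompt for trigger keywords and appends a recommendation line. The skill names, keywords, and the `[Recommended skills: ...]` format below are all hypothetical.

```python
import re

# Hypothetical skill registry: skill name -> trigger keywords (illustrative only).
SKILL_TRIGGERS = {
    "infra-master": {"deploy", "vps", "ssh", "nginx"},
    "frontend": {"react", "css", "component"},
    "database": {"sql", "migration", "schema"},
}


def recommend_skills(prompt: str) -> list[str]:
    """Return the skills whose trigger keywords appear in the prompt."""
    words = set(re.findall(r"[a-z0-9]+", prompt.lower()))
    return [name for name, triggers in SKILL_TRIGGERS.items() if words & triggers]


def augment_prompt(prompt: str) -> str:
    """Append a skill recommendation line, or return the prompt unchanged."""
    skills = recommend_skills(prompt)
    if not skills:
        return prompt
    return f"{prompt}\n\n[Recommended skills: {', '.join(skills)}]"
```

Keyword matching is the simplest possible trigger; a real hook could equally match on file paths or intent, which this sketch does not attempt.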

Context Min-Maxing & Intelligent Routing

Claude Fast optimizes the limited context window through a two-tier agent system. A central "orchestrator" Claude conserves its main context by delegating frequent or specialized tasks to sub-agents, which are guided to gather as much information as possible within their own temporary windows. Combined with intelligent routing, simple tasks go straight to specialists, while complex ones are first planned by the orchestrator, so the effort spent matches the task's complexity.
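The routing rule described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the framework's real logic: the specialist names, their keywords, and the word-count threshold used to decide what counts as "simple" are all hypothetical.

```python
import re
from dataclasses import dataclass

# Hypothetical specialists and the keywords that route to them.
SPECIALISTS = {
    "frontend": {"css", "react", "layout"},
    "backend": {"api", "endpoint", "database"},
}


@dataclass
class Route:
    handler: str   # "orchestrator" or a specialist name
    planned: bool  # True when the orchestrator plans the task first


def route_task(task: str, max_simple_words: int = 12) -> Route:
    """Send short, single-domain tasks to a specialist; plan everything else."""
    words = re.findall(r"[a-z0-9]+", task.lower())
    matches = [name for name, kws in SPECIALISTS.items() if kws & set(words)]
    if len(matches) == 1 and len(words) <= max_simple_words:
        return Route(matches[0], planned=False)
    return Route("orchestrator", planned=True)
```

A task that touches more than one domain, or that is too long to count as simple, falls through to the orchestrator for planning.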

Persistent Session Management

Say goodbye to losing your place after closing a chat. Every conversation is automatically managed as a self-writing session file. This allows you to pick up work exactly where you left off, across different devices and even across days. This persistent memory is crucial for long-term projects, eliminating the hours typically wasted on recreating context and state after every restart or interruption.
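One simple way to picture a self-writing session file is an append-only log that is reloaded on restart. The sketch below assumes a JSON Lines file with hypothetical `role` and `text` fields; the actual file format used by Claude Fast is not described here, and syncing across devices is out of scope.

```python
import json
from pathlib import Path


def append_turn(session_file: Path, role: str, text: str) -> None:
    """Append one conversation turn to the session file as a JSON line."""
    with session_file.open("a", encoding="utf-8") as f:
        f.write(json.dumps({"role": role, "text": text}) + "\n")


def load_session(session_file: Path) -> list[dict]:
    """Reload all saved turns, or return an empty list for a new session."""
    if not session_file.exists():
        return []
    with session_file.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

Because each turn is written as soon as it happens, an interrupted session loses at most the turn in progress.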

Infra Master Skill for Development & Deployments

This bundled skill encapsulates expert-level infrastructure and deployment knowledge, inspired by industry leaders. It enables Claude Code to SSH into your VPS, handle setup and security, and perform deployments directly. This turns what is often a days-long process of research and trial and error into a guided, automated procedure, condensing advanced DevOps knowledge into an on-demand skill.

OpenMark AI

Task Benchmarking

OpenMark AI allows users to benchmark various AI models against specific tasks they define. This feature simplifies the evaluation process by enabling users to describe their tasks in simple terms without needing coding skills or technical jargon.

Side-by-Side Comparisons

Utilizing real API calls, OpenMark AI offers side-by-side comparisons of different models. This feature ensures users see genuine performance metrics, allowing for a more accurate assessment of each model's capabilities based on real-time data.

Detailed Performance Metrics

Users can analyze key performance indicators such as cost per request, latency, and scored quality. This feature enables teams to quantify model performance and make data-driven decisions when selecting AI solutions for their projects.

Consistency Tracking

OpenMark AI tracks the stability of model outputs across repeated runs, providing insights into how consistently a model performs over time. This feature is crucial for ensuring reliability and predictability in AI-driven applications.
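To make these metrics concrete, here is a small sketch of how repeated runs of one model could be summarized. It is an assumption-laden illustration, not OpenMark AI's scoring method: the `Run` fields are hypothetical, and stability is approximated by the population standard deviation of the quality scores (lower means more consistent).

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Run:
    cost_usd: float   # cost of this request
    latency_s: float  # wall-clock latency in seconds
    score: float      # quality score in [0, 1]


def summarize(runs: list[Run]) -> dict[str, float]:
    """Aggregate repeated runs of one model on one task."""
    scores = [r.score for r in runs]
    return {
        "cost_per_request": mean([r.cost_usd for r in runs]),
        "mean_latency_s": mean([r.latency_s for r in runs]),
        "mean_score": mean(scores),
        "score_stdev": pstdev(scores),  # spread across runs; 0 means identical scores
    }
```

Two models with the same mean score can differ sharply in `score_stdev`, which is the distinction consistency tracking is meant to surface.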

Use Cases

Claude Fast

Rapid Full-Stack Prototyping

For solo founders or small teams, Claude Fast accelerates the journey from idea to live prototype. By leveraging the coordinated agent teams and the Infra Master skill, you can describe a full-stack application and have Claude handle the architecture, coding, and deployment to a live server. This use case turns weeks of work into a matter of days, enabling rapid validation of product concepts.

Maintaining Large, Complex Codebases

When working on extensive projects, Claude's standard context window becomes a bottleneck. Claude Fast's context min-maxing and session management allow developers to work on large refactors, debug intricate issues, or add features across multiple files without the AI losing the thread. The system maintains coherence over long interactions, making it ideal for enterprise-level or legacy code maintenance.

Automated Marketing & Content Creation

Using the Growth Kit, businesses can supercharge their go-to-market efforts. Claude Fast can coordinate specialists for SEO research, copywriting, and content structuring based on proven marketing frameworks. This transforms the AI into a full-scale marketing team, capable of producing targeted, conversion-optimized copy and content strategy without constant human direction and revision.

Streamlined DevOps & Continuous Deployment

The Infra Master skill directly addresses the pain point of deployment. Developers can instruct Claude to set up production environments, configure security (firewalls, SSL), and establish CI/CD pipelines. This use case is perfect for developers who are strong on application logic but want an expert guide for server management, reducing deployment from a risky weekend project to a routine, automated task.

OpenMark AI

Model Selection for Development

OpenMark AI is ideal for development teams looking to select the most suitable AI model for their applications. By benchmarking against specific tasks, teams can identify which models perform best under their unique requirements.

Cost Analysis for AI Implementations

Product managers can use OpenMark AI to conduct thorough cost analyses of different models. This helps them understand the financial implications of using various AI technologies and select options that offer the best balance of performance and cost.
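One way to express "the best balance of performance and cost" is quality per dollar. The sketch below ranks models by that ratio; the model names and figures are made up for illustration, and a real analysis would likely also apply a minimum quality threshold.

```python
def rank_by_value(models: dict[str, tuple[float, float]]) -> list[str]:
    """Rank models by quality per dollar, best first.

    `models` maps a model name to (mean_score, cost_per_request_usd).
    """
    def value(name: str) -> float:
        score, cost = models[name]
        # Treat zero-cost models as infinitely good value rather than dividing by zero.
        return score / cost if cost > 0 else float("inf")

    return sorted(models, key=value, reverse=True)


# Hypothetical figures: model-b scores lower but is far cheaper per request.
ranking = rank_by_value({"model-a": (0.9, 0.01), "model-b": (0.8, 0.002)})
```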

Quality Assurance Testing

Quality assurance teams can leverage OpenMark AI to validate the outputs of chosen models. By running multiple tests and comparing results, they can ensure that the models consistently meet quality standards before deployment.

Research and Development Initiatives

Researchers exploring advanced AI capabilities can utilize OpenMark AI to benchmark emerging models. This enables them to assess new technologies' effectiveness and stability, supporting innovation and informed decision-making in AI research.

Overview

About Claude Fast

Claude Fast is an AI development framework engineered to boost your productivity with Claude Code. It addresses the frustrations developers face when scaling AI-assisted workflows: forgotten context, manual agent coordination, and inefficient session management. By implementing a system of 11 coordinated AI specialists, Claude Fast automates task routing and planning, effectively multiplying your usable context window sixfold. This allows you to tackle complex, multi-step projects, from full-stack web apps to backend systems, without the cognitive overhead of micromanaging the AI.

The framework is built on Anthropic's official best practices and is designed for solo entrepreneurs, startup teams, and developers who need to ship products faster. Its core value lies in transforming Claude Code from a conversational assistant into a predictable, persistent development partner. With features like self-writing session files, intelligent skill activation, and the bundled Infra Master skill for deployment, Claude Fast removes guesswork and manual setup. You drag, drop, and prompt, shifting your focus from configuring AI to building and growing your business.

About OpenMark AI

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). Users describe what they want to test in plain language, which makes evaluation accessible to people without extensive technical expertise. The same prompts run against multiple models at once, and the results report cost per request, latency, scored quality, and stability across repeated runs. This helps developers and product teams select or validate a model before integrating AI features into their products.

Benchmarks are hosted and paid for with credits, so users do not need to manage separate API keys for OpenAI, Anthropic, or Google. OpenMark AI emphasizes real-world performance, showing actual API call results rather than relying on potentially misleading marketing metrics. Because cost is reported alongside quality, users can weigh output quality against expense and choose the most effective model for their specific workflows. Free and paid plans are available, with detailed information provided in the in-app billing section.

Frequently Asked Questions

Claude Fast FAQ

How do I install and set up Claude Fast?

There is no traditional installation. Claude Fast is designed as a "drop and prompt" framework. You simply download the Code Kit (a folder of skill files) and place it into your Claude Code workspace directory. Once loaded, you run a simple initialization command, and the system is ready. The entire setup is designed to be completed in under 30 seconds.

Is Claude Fast an official Anthropic product?

No, Claude Fast is not an official product of Anthropic. It is a third-party framework built meticulously by developers based on Anthropic's published recommendations, resources, and best practices for Claude Code. It is designed to be fully compatible and is constantly updated to align with new Claude Code releases and features like Agent Teams.

What is the difference between the Code Kit and the Growth Kit?

The Code Kit is focused on software development, containing skills for building web, mobile, backend, and DevOps projects. The Growth Kit is tailored for business growth activities, containing skills for sales, marketing, and research tasks like copywriting and SEO. The Complete Kit includes both, providing an end-to-end toolkit from product development to market launch.

How does the "Self-Improvement Loop" feature work?

As you use Claude Fast, the system actively prompts you to capture successful patterns, fixes, and new learnings. These insights can be formalized into new skill files or updates to existing ones. This means the framework doesn't just help you build your project; it also evolves and improves its own capabilities based on your real-world usage, becoming more effective over time.

OpenMark AI FAQ

How does OpenMark AI simplify the benchmarking process?

OpenMark AI simplifies benchmarking by allowing users to describe their tasks in plain language, eliminating the need for complex coding or technical setups. This makes it accessible for users of all skill levels.

What types of models can I benchmark using OpenMark AI?

OpenMark AI supports benchmarking a wide array of models from various providers, including OpenAI, Anthropic, and Google. This extensive catalog allows users to test over 100 models against their specific tasks.

Is OpenMark AI suitable for non-technical users?

Yes, OpenMark AI is designed to be user-friendly, enabling individuals without technical backgrounds to effectively benchmark AI models. The intuitive interface and plain language task descriptions facilitate ease of use.

Can I track performance consistency with OpenMark AI?

Absolutely. OpenMark AI offers features that track the consistency of model outputs across multiple runs, providing insights into how reliably a model performs over time, which is critical for applications requiring stable results.

Alternatives

Claude Fast Alternatives

Claude Fast is an AI development framework designed to enhance the Claude Code experience. It falls into the category of AI assistants and agent orchestration platforms, focusing on streamlining coding workflows through intelligent task management and context optimization.

Users often explore alternatives for various reasons. These can include budget constraints, specific feature requirements not met by the current tool, or the need for compatibility with different AI models and development environments. The search for the right fit is a normal part of evaluating any software solution.

When choosing an alternative, consider your core needs. Key factors often include the platform's ability to manage context efficiently, its integration capabilities with your existing tools, the flexibility of its agent system, and overall cost-effectiveness for your team's scale and projects.

OpenMark AI Alternatives

OpenMark AI is a web-based application designed for benchmarking various large language models (LLMs) based on specific tasks. It allows developers and product teams to evaluate models by comparing metrics such as cost, speed, quality, and stability, making it easier to make informed decisions before deploying AI features.

Users often seek alternatives to OpenMark AI for reasons such as pricing variations, specific feature sets, or integration capabilities that better meet their platform needs.

When choosing an alternative, it is essential to consider factors such as the range of supported models, the accuracy and reliability of benchmarking results, ease of use, and any associated costs. Look for solutions that provide comprehensive insights into model performance and cost efficiency, ensuring they align with your development goals and workflow requirements.