HookMesh vs OpenMark AI

Side-by-side comparison to help you choose the right product.

HookMesh adds reliable webhook delivery, automatic retries, and a self-service customer portal to your SaaS.

Last updated: February 26, 2026

OpenMark AI benchmarks over 100 LLMs for your specific tasks, providing instant insights on cost, speed, quality, and stability without setup.

Last updated: March 26, 2026

Visual Comparison

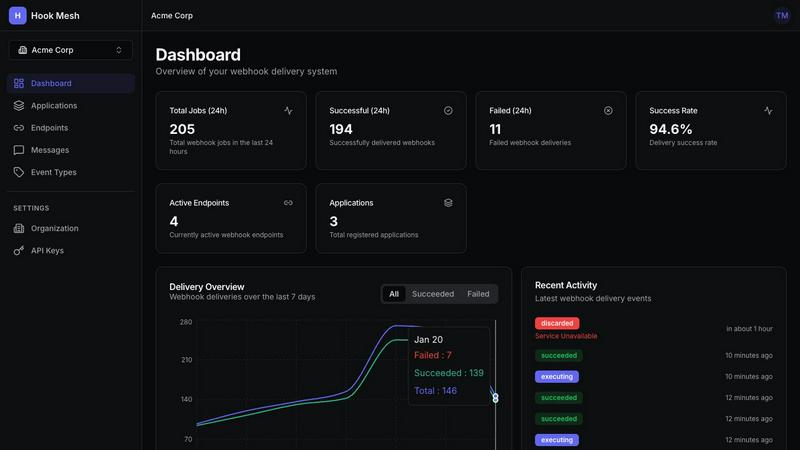

HookMesh

OpenMark AI

Feature Comparison

HookMesh

Reliable Delivery

HookMesh is built so that webhooks are not lost when an endpoint is temporarily unavailable. Failed deliveries are retried automatically using exponential backoff with jitter, which spaces attempts further apart after each failure and randomizes their timing so retries do not arrive in synchronized bursts. Retries continue for up to 48 hours, significantly increasing the chances of successful delivery and keeping the experience seamless for end-users.
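To make the retry behavior concrete, here is a minimal sketch of exponential backoff with jitter inside a 48-hour window. It is not HookMesh's implementation: the function name `backoff_schedule`, the base delay, the growth factor, the per-attempt cap, and the "equal jitter" variant are all assumptions chosen for illustration. Only the 48-hour window comes from the description above.

```python
import random


def backoff_schedule(base=5.0, factor=2.0, cap=3600.0,
                     window=48 * 3600.0, rng=None):
    """Return a list of retry delays (seconds) that fit inside `window`.

    Hypothetical parameters: `base`, `factor`, and `cap` are illustrative
    defaults, not values published by HookMesh.
    """
    rng = rng or random.Random()
    delays = []
    elapsed = 0.0
    attempt = 0
    while True:
        # Exponential growth, limited by a per-attempt ceiling.
        ceiling = min(cap, base * factor ** attempt)
        # "Equal jitter": half the ceiling is fixed, half is random.
        delay = ceiling / 2 + rng.uniform(0, ceiling / 2)
        if elapsed + delay > window:
            break  # the next retry would fall outside the window
        delays.append(delay)
        elapsed += delay
        attempt += 1
    return delays


if __name__ == "__main__":
    schedule = backoff_schedule(rng=random.Random(0))
    print(f"{len(schedule)} retries over {sum(schedule) / 3600:.1f} hours")
```

The random component keeps many failing deliveries from retrying at the same instant, while the fixed half guarantees a minimum pause between attempts.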

Circuit Breaker

The circuit breaker feature automatically disables failing endpoints to prevent a single slow or down endpoint from backing up the entire queue. Once the endpoint recovers, it is automatically re-enabled, thus maintaining operational efficiency and reducing downtime for webhook deliveries.
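The circuit breaker pattern described above can be sketched as follows. This is a generic illustration under stated assumptions, not HookMesh's code: the class name, the failure threshold, and the cooldown period are hypothetical.

```python
import time


class CircuitBreaker:
    """Tracks one endpoint; pauses delivery after repeated failures."""

    def __init__(self, failure_threshold=5, cooldown=60.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold  # assumed value
        self.cooldown = cooldown                    # assumed value, seconds
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def allow(self):
        """Return True if a delivery attempt may be made right now."""
        if self.opened_at is None:
            return True
        # After the cooldown, let a probe request through (half-open).
        return self.clock() - self.opened_at >= self.cooldown

    def record_success(self):
        """A delivery succeeded: close the circuit and reset the count."""
        self.failures = 0
        self.opened_at = None

    def record_failure(self):
        """A delivery failed: open (or re-open) the circuit at the threshold."""
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()
```

A sender would call `allow()` before each attempt and skip the endpoint while it returns `False`, so one failing endpoint does not hold up deliveries to the others.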

Customer Portal

The self-service customer portal allows users to manage their webhook endpoints effortlessly. It features an embeddable UI that enables customers to add or modify endpoints, view delivery logs for full request/response visibility, and instantly replay any failed webhook deliveries with a single click.

Developer Experience

HookMesh provides a REST API and official SDKs for JavaScript, Python, and Go. Developers can start sending events in a few lines of code and keep their attention on building their product.

OpenMark AI

Task Benchmarking

OpenMark AI allows users to benchmark various AI models against specific tasks they define. This feature simplifies the evaluation process by enabling users to describe their tasks in simple terms without needing coding skills or technical jargon.

Side-by-Side Comparisons

Utilizing real API calls, OpenMark AI offers side-by-side comparisons of different models. This feature ensures users see genuine performance metrics, allowing for a more accurate assessment of each model's capabilities based on real-time data.

Detailed Performance Metrics

Users can analyze key performance indicators such as cost per request, latency, and scored quality. This feature enables teams to quantify model performance and make data-driven decisions when selecting AI solutions for their projects.

Consistency Tracking

OpenMark AI tracks the stability of model outputs across repeated runs, providing insights into how consistently a model performs over time. This feature is crucial for ensuring reliability and predictability in AI-driven applications.
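One simple way to quantify run-to-run stability is to summarize the quality scores from repeated runs of the same task. The sketch below is an assumption for illustration only: OpenMark AI's actual scoring method is not described here, and the function name and sample scores are made up.

```python
from statistics import mean, pstdev


def summarize_runs(scores):
    """Summarize quality scores from repeated runs of one model on one task.

    A lower standard deviation and a smaller spread indicate steadier output.
    """
    return {
        "mean": mean(scores),
        "stdev": pstdev(scores),            # population standard deviation
        "spread": max(scores) - min(scores),
    }


if __name__ == "__main__":
    # Hypothetical scores on a 0-1 scale, for illustration only.
    print(summarize_runs([0.82, 0.80, 0.85, 0.79]))
```

Two models with the same mean score can differ widely in spread, which is why stability is worth tracking alongside average quality.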

Use Cases

HookMesh

E-commerce Order Notifications

E-commerce platforms can utilize HookMesh to send real-time order notifications to customers. By ensuring that order status updates are delivered reliably, businesses can enhance customer satisfaction and reduce inquiries about order status.

Payment Processing

Payment gateways can leverage HookMesh to handle webhook notifications for transaction events, such as payment confirmations or refunds. Reliable delivery of these notifications is crucial for maintaining accurate records and ensuring user trust.

CRM Updates

Customer Relationship Management (CRM) systems can use HookMesh to deliver updates to various integrated applications. Whether it’s notifying partners about new leads or updating user records, HookMesh ensures that critical data is consistently delivered.

Event-Driven Applications

For applications that rely on event-driven architectures, HookMesh provides a robust solution for sending and receiving webhook events. This capability allows organizations to respond instantly to user actions or system events, improving responsiveness and user engagement.

OpenMark AI

Model Selection for Development

OpenMark AI is ideal for development teams looking to select the most suitable AI model for their applications. By benchmarking against specific tasks, teams can identify which models perform best under their unique requirements.

Cost Analysis for AI Implementations

Product managers can use OpenMark AI to conduct thorough cost analyses of different models. This helps them understand the financial implications of using various AI technologies and select options that offer the best balance of performance and cost.

Quality Assurance Testing

Quality assurance teams can leverage OpenMark AI to validate the outputs of chosen models. By running multiple tests and comparing results, they can ensure that the models consistently meet quality standards before deployment.

Research and Development Initiatives

Researchers exploring advanced AI capabilities can utilize OpenMark AI to benchmark emerging models. This enables them to assess new technologies' effectiveness and stability, supporting innovation and informed decision-making in AI research.

Overview

About HookMesh

HookMesh is a webhook delivery platform for modern Software as a Service (SaaS) products. It handles the parts of webhook delivery that are hard to build and maintain in-house, including retry logic, circuit breakers, and debugging delivery issues, so organizations can focus on their core offerings instead of webhook infrastructure. The platform describes its infrastructure as battle-tested and supports reliable delivery through automatic retries with exponential backoff, while idempotency keys let receivers recognize an event that has been delivered more than once. Aimed at developers and product teams, HookMesh also includes a self-service customer portal where users can manage endpoints, inspect deliveries, and replay failed webhooks with one click.
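Because retries can deliver the same event more than once, receivers typically use the idempotency key to skip duplicates. The following is a minimal receiver-side sketch. The class name, the in-memory set, and the way the key is passed in are assumptions for illustration and do not reflect HookMesh's actual payload format.

```python
class IdempotentReceiver:
    """Runs a handler at most once per idempotency key."""

    def __init__(self, handler):
        self.handler = handler
        self.seen = set()  # in production this would be a durable store

    def receive(self, idempotency_key, payload):
        """Process the payload unless this key was already handled.

        Returns True if the handler ran, False if the event was a duplicate.
        """
        if idempotency_key in self.seen:
            return False
        self.handler(payload)
        # Mark the key only after the handler succeeds, so a failed
        # attempt can still be retried later.
        self.seen.add(idempotency_key)
        return True


if __name__ == "__main__":
    receiver = IdempotentReceiver(lambda payload: print("processing", payload))
    receiver.receive("evt_1", {"order": 42})   # handler runs
    receiver.receive("evt_1", {"order": 42})   # duplicate, skipped
```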

About OpenMark AI

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). Users describe what they want to test in plain language, which makes evaluation accessible to people without extensive technical expertise. The same prompts run against multiple models at once, and the results report cost per request, latency, scored quality, and stability across multiple runs. This helps developers and product teams select or validate the most appropriate model before integrating AI features into their products. Benchmarking is hosted and paid for with credits, so users do not need to manage separate API keys for OpenAI, Anthropic, or Google. Results come from actual API calls rather than marketing figures, so users can weigh output quality against cost and choose the model that best fits their workflow. Free and paid plans are available, with details in the in-app billing section.

Frequently Asked Questions

HookMesh FAQ

How does HookMesh ensure reliable webhook delivery?

HookMesh employs automatic retries with exponential backoff and circuit breakers to manage delivery attempts. This approach ensures that webhooks are retried intelligently over a 48-hour window, minimizing the risk of lost notifications.

What types of applications can benefit from using HookMesh?

HookMesh is ideal for any application that relies on webhook notifications, such as e-commerce platforms, payment processors, CRM systems, and event-driven applications. It streamlines webhook management and enhances reliability across various use cases.

Is there a free trial available for HookMesh?

Yes, HookMesh offers a free tier that includes 5,000 webhooks per month without requiring a credit card. This allows businesses to explore the platform's capabilities before committing to a paid plan.

How can my customers manage their webhooks?

Customers can utilize the self-service portal provided by HookMesh, which features tools for endpoint management, delivery logs, and the ability to replay failed webhooks with one click, ensuring they have full control over their webhook strategy.

OpenMark AI FAQ

How does OpenMark AI simplify the benchmarking process?

OpenMark AI simplifies benchmarking by allowing users to describe their tasks in plain language, eliminating the need for complex coding or technical setups. This makes it accessible for users of all skill levels.

What types of models can I benchmark using OpenMark AI?

OpenMark AI supports benchmarking a wide array of models from various providers, including OpenAI, Anthropic, and Google. This extensive catalog allows users to test over 100 models against their specific tasks.

Is OpenMark AI suitable for non-technical users?

Yes, OpenMark AI is designed to be user-friendly, enabling individuals without technical backgrounds to effectively benchmark AI models. The intuitive interface and plain language task descriptions facilitate ease of use.

Can I track performance consistency with OpenMark AI?

Absolutely. OpenMark AI offers features that track the consistency of model outputs across multiple runs, providing insights into how reliably a model performs over time, which is critical for applications requiring stable results.

Alternatives

HookMesh Alternatives

HookMesh is a robust platform designed to streamline webhook delivery for Software as a Service (SaaS) products. By addressing the challenges associated with in-house webhook management, such as retry logic and debugging, it allows businesses to focus on their core functionalities without the hassle of technical complexities. Users often seek alternatives to HookMesh for various reasons, including pricing constraints, specific feature requirements, or the need for compatibility with different platforms. When searching for alternatives, it's essential to consider factors such as reliability of delivery, user experience, and the ability to manage webhooks effectively through a self-service interface. Finding the right solution can significantly impact the efficiency of webhook operations. Look for alternatives that offer features like automatic retries, idempotency, and comprehensive logging to ensure you maintain control over your webhook processes. Additionally, evaluating the ease of integration and support options can help you choose a platform that aligns well with your business needs while enhancing your overall webhook strategy.

OpenMark AI Alternatives

OpenMark AI is a web-based application designed for benchmarking various large language models (LLMs) based on specific tasks. It allows developers and product teams to evaluate models by comparing metrics such as cost, speed, quality, and stability, making it easier to make informed decisions before deploying AI features. Users often seek alternatives to OpenMark AI for reasons such as pricing variations, specific feature sets, or integration capabilities that better meet their platform needs. When choosing an alternative, it is essential to consider factors such as the range of supported models, the accuracy and reliability of benchmarking results, ease of use, and any associated costs. Look for solutions that provide comprehensive insights into model performance and cost efficiency, ensuring they align with your development goals and workflow requirements.