diffray vs OpenMark AI

Side-by-side comparison to help you choose the right product.

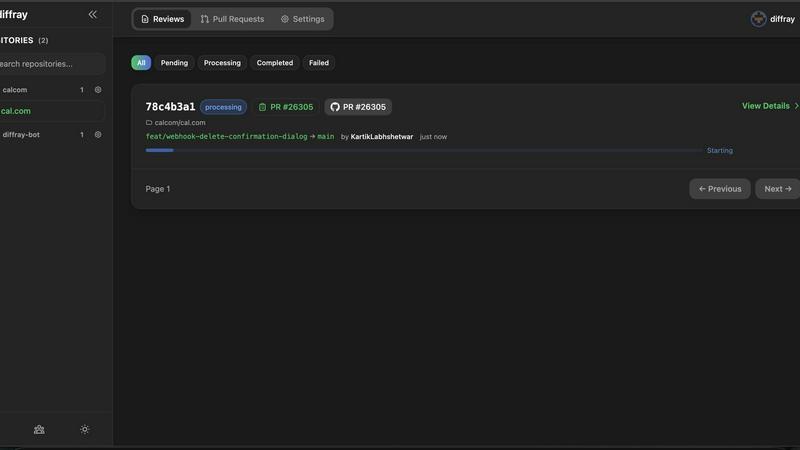

diffray

diffray's AI code review detects real bugs while reducing false positives by 87% for more efficient software development.

Last updated: February 28, 2026

OpenMark AI

OpenMark AI benchmarks over 100 LLMs on your specific tasks, providing instant insights into cost, speed, quality, and stability without setup.

Last updated: March 26, 2026

Feature Comparison

diffray

Specialized AI Agents

diffray employs a unique fleet of over 30 specialized AI agents, each tailored to address specific aspects of code quality, including security vulnerabilities, performance optimization, bug detection, and adherence to best practices. This multi-agent strategy ensures that reviews are context-aware and highly relevant, leading to more actionable feedback.

Context-Aware Feedback

Unlike traditional tools that may provide generic comments, diffray delivers clean, context-aware suggestions that integrate seamlessly with your codebase. This targeted feedback reduces noise and helps developers understand the implications of their changes, making the review process more efficient and informative.

Seamless GitHub Integration

diffray offers easy integration with GitHub, allowing teams to incorporate the platform into their existing workflows without disruptions. This integration enables automatic feedback on pull requests, facilitating a smoother code review process that aligns with your team's development practices.

Educational Insights

Beyond just identifying issues, diffray aims to educate developers by providing detailed explanations and best practices alongside its feedback. This empowers teams to learn from each review, fostering a culture of continuous improvement and skill enhancement within the development environment.

OpenMark AI

Task Benchmarking

OpenMark AI allows users to benchmark various AI models against specific tasks they define. This feature simplifies the evaluation process by enabling users to describe their tasks in simple terms without needing coding skills or technical jargon.

Side-by-Side Comparisons

Using real API calls, OpenMark AI compares different models side by side. Users see measured performance rather than advertised figures, which allows a more accurate assessment of each model's capabilities.

Detailed Performance Metrics

Users can analyze key performance indicators such as cost per request, latency, and scored quality. This feature enables teams to quantify model performance and make data-driven decisions when selecting AI solutions for their projects.
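As a rough illustration of how these indicators relate to each other, the sketch below computes cost per request and quality per dollar from token counts. It is not OpenMark AI's implementation: the model names, token prices, and the `RunResult` structure are all hypothetical, chosen only to make the arithmetic concrete.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    """One benchmark request (hypothetical structure, for illustration only)."""
    model: str
    input_tokens: int
    output_tokens: int
    latency_s: float   # wall-clock seconds for the request
    quality: float     # scored quality in the range [0, 1]


# Hypothetical prices in USD per million tokens: (input, output).
PRICES = {
    "model-a": (3.00, 15.00),
    "model-b": (0.50, 1.50),
}


def cost_per_request(result: RunResult) -> float:
    """Cost in USD of a single request, from token counts and per-token prices."""
    price_in, price_out = PRICES[result.model]
    return (result.input_tokens * price_in
            + result.output_tokens * price_out) / 1_000_000


def quality_per_dollar(result: RunResult) -> float:
    """Quality score divided by cost: higher means more quality for the money."""
    return result.quality / cost_per_request(result)


if __name__ == "__main__":
    runs = [
        RunResult("model-a", 1000, 500, latency_s=1.2, quality=0.9),
        RunResult("model-b", 1000, 500, latency_s=0.6, quality=0.8),
    ]
    for r in runs:
        print(r.model, cost_per_request(r), r.latency_s, quality_per_dollar(r))
```

With these made-up numbers, the cheaper model scores slightly lower on quality but far higher on quality per dollar, which is the kind of trade-off the metrics are meant to expose.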

Consistency Tracking

OpenMark AI tracks the stability of model outputs across repeated runs, providing insights into how consistently a model performs over time. This feature is crucial for ensuring reliability and predictability in AI-driven applications.
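One simple way to quantify stability across repeated runs is the spread of quality scores for the same prompt. The sketch below uses the mean and population standard deviation; this is an assumed measure for illustration, not necessarily how OpenMark AI scores stability, and the scores are invented.

```python
from statistics import mean, pstdev


def stability_summary(scores: list[float]) -> dict[str, float]:
    """Summarize repeated-run quality scores for one model on one task.

    A lower standard deviation means the model's output quality varies
    less from run to run. The choice of metric is an assumption.
    """
    if not scores:
        raise ValueError("at least one score is required")
    return {
        "mean": mean(scores),
        "stdev": pstdev(scores),            # population standard deviation
        "range": max(scores) - min(scores),
    }


if __name__ == "__main__":
    # Invented scores from five runs of the same prompt on two models.
    steady = [0.80, 0.82, 0.79, 0.81, 0.80]
    erratic = [0.95, 0.60, 0.90, 0.55, 0.98]
    print("steady ", stability_summary(steady))
    print("erratic", stability_summary(erratic))
```

Two models with similar average quality can differ sharply on spread, so looking at the mean alone can hide unreliable behavior.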

Use Cases

diffray

Streamlined Code Reviews

Development teams can use diffray to streamline their code review process, reducing the time spent sifting through irrelevant suggestions. By providing targeted feedback from specialized agents, diffray keeps developers focused on the issues that matter most.

Improved Code Quality

With its ability to detect real, critical issues, diffray helps teams significantly improve overall code quality. By addressing security vulnerabilities and performance bottlenecks early in the development cycle, diffray aids in delivering more robust and secure applications.

Enhanced Team Collaboration

diffray fosters better collaboration among team members by providing a shared understanding of code quality standards. As developers receive constructive feedback that is easy to interpret, it encourages more effective discussions during code reviews, leading to collective problem-solving.

Accelerated Development Cycles

By transforming code reviews from a time-consuming chore into a streamlined process, diffray accelerates development cycles. Teams can iterate faster, address issues promptly, and maintain a steady pace of innovation without sacrificing code quality.

OpenMark AI

Model Selection for Development

OpenMark AI is ideal for development teams looking to select the most suitable AI model for their applications. By benchmarking against specific tasks, teams can identify which models perform best under their unique requirements.

Cost Analysis for AI Implementations

Product managers can use OpenMark AI to conduct thorough cost analyses of different models. This helps them understand the financial implications of using various AI technologies and select options that offer the best balance of performance and cost.

Quality Assurance Testing

Quality assurance teams can leverage OpenMark AI to validate the outputs of chosen models. By running multiple tests and comparing results, they can ensure that the models consistently meet quality standards before deployment.

Research and Development Initiatives

Researchers exploring advanced AI capabilities can utilize OpenMark AI to benchmark emerging models. This enables them to assess new technologies' effectiveness and stability, supporting innovation and informed decision-making in AI research.

Overview

About diffray

diffray is a multi-agent AI code review platform designed to improve the code review process for development teams. It uses a fleet of over 30 specialized AI agents, each focused on a distinct area such as security, performance, bug detection, best practices, or SEO, instead of the single generic AI model that traditional code review tools depend on. This approach lets diffray take the full context of your codebase into account rather than analyzing isolated diffs. Its main value proposition is a significant reduction in false positives while still uncovering critical issues that affect your project's quality and security. By making code reviews streamlined and educational, diffray saves time and lets developers and engineering leaders focus on meaningful improvements to code quality and development speed.

About OpenMark AI

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). Users describe what they want to test in plain language, which makes evaluation accessible to people without extensive technical expertise. OpenMark AI runs the same prompts against multiple models at once and reports cost per request, latency, scored quality, and stability across multiple runs. This is useful for developers and product teams who need to select or validate a model before integrating AI features into their products. Benchmarking is hosted and paid for with credits, so users do not need to manage separate API keys for OpenAI, Anthropic, or Google. Results come from actual API calls rather than potentially misleading marketing metrics, and the emphasis on cost efficiency lets users weigh output quality against expense when choosing a model for a specific workflow. Free and paid plans are available, with details in the in-app billing section.

Frequently Asked Questions

diffray FAQ

How does diffray differ from traditional code review tools?

diffray sets itself apart by utilizing a fleet of over 30 specialized AI agents, each focusing on specific aspects of code quality, rather than relying on a single generic model. This allows for more accurate and context-aware feedback.

Is diffray easy to integrate with existing workflows?

Yes, diffray is designed for seamless integration with GitHub, making it simple to incorporate into your existing development workflows. The setup process is straightforward, ensuring minimal disruption to your team's operations.

What types of issues can diffray identify?

diffray is capable of identifying a wide range of issues, including security vulnerabilities, performance inefficiencies, bugs, and violations of best practices. Its multi-agent approach ensures comprehensive coverage of various code quality aspects.

Can diffray help with team training and knowledge sharing?

Absolutely. diffray not only highlights issues but also provides educational insights and best practices. This feature promotes knowledge sharing and skill development within teams, making it an invaluable tool for continuous learning.

OpenMark AI FAQ

How does OpenMark AI simplify the benchmarking process?

OpenMark AI simplifies benchmarking by allowing users to describe their tasks in plain language, eliminating the need for complex coding or technical setups. This makes it accessible for users of all skill levels.

What types of models can I benchmark using OpenMark AI?

OpenMark AI supports benchmarking a wide array of models from various providers, including OpenAI, Anthropic, and Google. This extensive catalog allows users to test over 100 models against their specific tasks.

Is OpenMark AI suitable for non-technical users?

Yes, OpenMark AI is designed to be user-friendly, enabling individuals without technical backgrounds to effectively benchmark AI models. The intuitive interface and plain language task descriptions facilitate ease of use.

Can I track performance consistency with OpenMark AI?

Absolutely. OpenMark AI offers features that track the consistency of model outputs across multiple runs, providing insights into how reliably a model performs over time, which is critical for applications requiring stable results.

Alternatives

diffray Alternatives

diffray is a multi-agent AI code review platform that provides intelligent feedback on pull requests. It belongs to the development category and is designed to help teams identify bugs and improve code quality with fewer false positives. Users often seek alternatives because of pricing, feature sets, or specific platform integration needs. When looking for an alternative, consider the depth of analysis, context awareness, user experience, and how well the tool aligns with your team's workflow and coding standards.

OpenMark AI Alternatives

OpenMark AI is a web-based application designed for benchmarking various large language models (LLMs) based on specific tasks. It allows developers and product teams to evaluate models by comparing metrics such as cost, speed, quality, and stability, making it easier to make informed decisions before deploying AI features. Users often seek alternatives to OpenMark AI for reasons such as pricing variations, specific feature sets, or integration capabilities that better meet their platform needs. When choosing an alternative, it is essential to consider factors such as the range of supported models, the accuracy and reliability of benchmarking results, ease of use, and any associated costs. Look for solutions that provide comprehensive insights into model performance and cost efficiency, ensuring they align with your development goals and workflow requirements.