OpenMark AI vs qtrl.ai

Side-by-side comparison to help you choose the right product.

OpenMark AI

OpenMark AI benchmarks more than 100 LLMs on your specific tasks, reporting cost, speed, quality, and stability with no setup required.

Last updated: March 26, 2026

qtrl.ai

qtrl.ai helps QA teams scale testing with AI agents while maintaining full control and governance.

Last updated: March 4, 2026

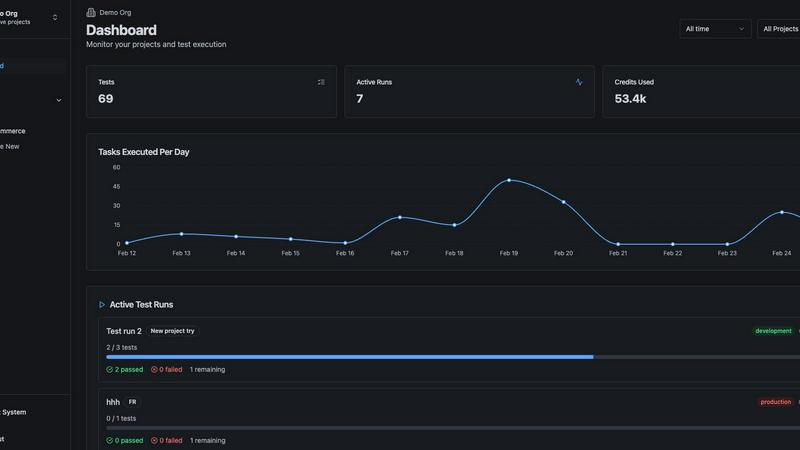

Visual Comparison

OpenMark AI

qtrl.ai

Feature Comparison

OpenMark AI

Task Benchmarking

OpenMark AI allows users to benchmark various AI models against specific tasks they define. This feature simplifies the evaluation process by enabling users to describe their tasks in simple terms without needing coding skills or technical jargon.

Side-by-Side Comparisons

OpenMark AI compares models side by side using real API calls. Users see measured performance rather than published figures, which supports a more accurate assessment of each model's capabilities.

Detailed Performance Metrics

Users can analyze key performance indicators such as cost per request, latency, and scored quality. This feature enables teams to quantify model performance and make data-driven decisions when selecting AI solutions for their projects.
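To make these metrics concrete, here is a minimal sketch of how per-model averages could be computed from individual benchmark calls. The `RunResult` fields and the `summarize` helper are hypothetical names chosen for illustration; they are not OpenMark AI's data model or API.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RunResult:
    """One benchmark call. Field names are assumptions for this sketch."""
    model: str
    cost_usd: float   # cost of this single request
    latency_s: float  # wall-clock seconds for the request
    quality: float    # score in [0, 1] from whichever grader is used


def summarize(results: list[RunResult]) -> dict[str, dict[str, float]]:
    """Group runs by model and average each metric."""
    by_model: dict[str, list[RunResult]] = {}
    for r in results:
        by_model.setdefault(r.model, []).append(r)
    return {
        model: {
            "cost_per_request": mean(r.cost_usd for r in runs),
            "mean_latency_s": mean(r.latency_s for r in runs),
            "mean_quality": mean(r.quality for r in runs),
        }
        for model, runs in by_model.items()
    }
```

Averaging is only one possible summary; a team that cares about worst-case latency might report a high percentile instead.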

Consistency Tracking

OpenMark AI tracks the stability of model outputs across repeated runs, providing insights into how consistently a model performs over time. This feature is crucial for ensuring reliability and predictability in AI-driven applications.
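One simple way to express stability across repeated runs is the spread of quality scores for the same prompt and model. The sketch below uses the population standard deviation as that spread. The source does not say how OpenMark AI scores stability, so the function names and the threshold are assumptions.

```python
from statistics import pstdev


def score_spread(scores: list[float]) -> float:
    """Population standard deviation of repeated-run scores; lower is steadier."""
    if len(scores) < 2:
        raise ValueError("need at least two runs to measure spread")
    return pstdev(scores)


def is_stable(scores: list[float], max_spread: float = 0.05) -> bool:
    """Hypothetical pass/fail check; the 0.05 threshold is an arbitrary example."""
    return score_spread(scores) <= max_spread
```

A model that scores 0.8 on every run has a spread of zero, while one that alternates between high and low scores is flagged even if its average looks acceptable.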

qtrl.ai

Enterprise-Grade Test Management

qtrl provides a centralized command center for all QA activities. Teams can create and organize test cases, build detailed test plans, execute manual and automated test runs, and establish clear traceability from requirements to test coverage. Real-time dashboards offer instant visibility into quality metrics, pass/fail rates, and potential risk areas, making it ideal for managers and leads who need audit trails and compliance-ready reporting without sacrificing agility.

Progressive AI & Autonomous Agents

This feature introduces intelligent automation at your team's pace. Start by writing simple, high-level test instructions in plain English for the AI agent to execute. As trust builds, leverage agents to automatically generate full UI test scripts from descriptions, maintain them through application changes, and run them at scale across multiple browsers and environments. All AI suggestions are fully reviewable and approvable, ensuring humans remain in the loop and governance is never compromised.

Adaptive Memory & Context Awareness

qtrl's AI builds a living, evolving knowledge base of your application. It learns from every interaction—including exploratory testing, test execution results, and logged issues. This accumulated context powers smarter, more accurate test generation over time. The system becomes more effective and aware of your specific application's behavior and coverage gaps, proactively suggesting new tests to improve overall quality.

Multi-Environment Execution with Governance

Execute tests securely across any environment—development, staging, or production. qtrl supports per-environment configuration variables and encrypted secrets, ensuring sensitive data like API keys or passwords are never exposed to the AI agents. You define default environments and rules, giving agents permissioned autonomy to operate at scale while maintaining stringent security and operational controls.
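The separation between what an agent can see and what is injected at run time can be sketched as follows. This is an illustration of the general pattern only: the `Environment` class, its methods, and the `${NAME}` placeholder syntax are assumptions, not qtrl's configuration format, and the sketch omits encryption entirely.

```python
from dataclasses import dataclass, field
from string import Template


@dataclass
class Environment:
    """Hypothetical per-environment settings for this sketch."""
    name: str
    variables: dict[str, str] = field(default_factory=dict)  # safe to show an agent
    secrets: dict[str, str] = field(default_factory=dict)    # never shown to an agent

    def agent_view(self) -> dict[str, str]:
        """What a planning agent would see: variables plus masked secret names."""
        masked = {key: "***" for key in self.secrets}
        return {**self.variables, **masked}

    def resolve(self, step: str) -> str:
        """Substitute ${NAME} placeholders just before the step is executed."""
        return Template(step).substitute({**self.variables, **self.secrets})


staging = Environment(
    name="staging",
    variables={"BASE_URL": "https://staging.example.test"},
    secrets={"PASSWORD": "s3cret"},
)
```

Here the agent would plan against `staging.agent_view()` and write steps such as `type ${PASSWORD}`, while only the executor calls `resolve` with the real value.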

Use Cases

OpenMark AI

Model Selection for Development

OpenMark AI is ideal for development teams looking to select the most suitable AI model for their applications. By benchmarking against specific tasks, teams can identify which models perform best under their unique requirements.

Cost Analysis for AI Implementations

Product managers can use OpenMark AI to conduct thorough cost analyses of different models. This helps them understand the financial implications of using various AI technologies and select options that offer the best balance of performance and cost.

Quality Assurance Testing

Quality assurance teams can leverage OpenMark AI to validate the outputs of chosen models. By running multiple tests and comparing results, they can ensure that the models consistently meet quality standards before deployment.

Research and Development Initiatives

Researchers can use OpenMark AI to benchmark emerging models and assess their effectiveness and stability on defined tasks, supporting informed decisions in AI research.

qtrl.ai

Scaling Beyond Manual Testing

QA teams overwhelmed by repetitive manual test cycles can use qtrl to systematically introduce automation. They begin by structuring their existing manual cases in the platform, then progressively offload execution to AI agents. This allows the team to scale test coverage and frequency without linearly increasing headcount, freeing human testers to focus on complex, exploratory, and high-value testing activities.

Modernizing Legacy QA Workflows

Companies relying on outdated, siloed, or script-heavy automation frameworks can modernize without a risky "big bang" replacement. qtrl integrates with existing tools and processes, allowing teams to gradually transition. They can maintain current scripts while using qtrl's AI to generate new, more maintainable tests and centralize all management and reporting, reducing technical debt and brittleness over time.

Ensuring Governance in Enterprise AI Adoption

Enterprises that require strict compliance, audit trails, and control can safely adopt AI for QA. qtrl's permissioned autonomy, full visibility into agent actions, and review gates ensure that all automation is transparent and accountable. This allows large organizations to gain the speed benefits of AI while meeting internal security, regulatory, and governance mandates without relying on unpredictable "black-box" solutions.

Accelerating Product-Led Engineering Teams

Fast-moving product and engineering teams that need rapid quality feedback can integrate qtrl into their CI/CD pipelines. Developers and product managers can write high-level test instructions for features, and qtrl's agents can automatically generate and run the corresponding tests. This creates continuous quality feedback loops, catches regressions early, and helps maintain high release velocity without creating a testing bottleneck.

Overview

About OpenMark AI

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). Users describe what they want to test in plain language, which makes evaluation accessible to those without extensive technical expertise. The same prompts run against multiple models at once, and results report cost per request, latency, scored quality, and stability across multiple runs. This helps developers and product teams select or validate a model before integrating AI features into their products. Benchmarking is hosted and paid for with credits, so users do not need to manage separate API keys for OpenAI, Anthropic, or Google. OpenMark AI emphasizes real-world performance, showing actual API call results rather than potentially misleading marketing metrics. Because cost is reported alongside quality, users can weigh output quality against expense and choose the model that best fits their workflows. Free and paid plans are available, with details in the in-app billing section.

About qtrl.ai

qtrl.ai is a modern, AI-powered QA platform designed to solve the fundamental scaling challenges faced by software development teams. It bridges the gap between slow, manual testing processes and the brittle, expensive complexity of traditional test automation. qtrl provides a unified solution that combines enterprise-grade test management with intelligent, trustworthy AI automation. This allows teams to organize test cases, plan runs, trace requirements, and track quality metrics from a single centralized hub, ensuring full visibility and governance. The platform's progressive approach to AI is its key differentiator; instead of forcing a risky, fully autonomous model, it allows teams to start with structured manual management and gradually introduce AI agents that can generate, maintain, and execute UI tests from plain English. Built for product-led engineering teams, QA groups moving beyond manual testing, and enterprises requiring strict compliance, qtrl offers a controlled, step-by-step path to faster, more intelligent, and scalable quality assurance without sacrificing oversight or trust.

Frequently Asked Questions

OpenMark AI FAQ

How does OpenMark AI simplify the benchmarking process?

OpenMark AI simplifies benchmarking by allowing users to describe their tasks in plain language, eliminating the need for complex coding or technical setups. This makes it accessible for users of all skill levels.

What types of models can I benchmark using OpenMark AI?

OpenMark AI supports benchmarking a wide array of models from various providers, including OpenAI, Anthropic, and Google. This extensive catalog allows users to test over 100 models against their specific tasks.

Is OpenMark AI suitable for non-technical users?

Yes, OpenMark AI is designed to be user-friendly, enabling individuals without technical backgrounds to effectively benchmark AI models. The intuitive interface and plain language task descriptions facilitate ease of use.

Can I track performance consistency with OpenMark AI?

Absolutely. OpenMark AI offers features that track the consistency of model outputs across multiple runs, providing insights into how reliably a model performs over time, which is critical for applications requiring stable results.

qtrl.ai FAQ

How does qtrl.ai ensure the AI doesn't make unpredictable changes?

qtrl is built on a principle of "permissioned autonomy." The AI does not make changes or execute tests without review and approval, unless explicitly configured to do so within strict rules you define. All AI-generated test scripts and modifications are presented for human review first. You maintain full visibility into every action the agent takes, ensuring there are no "black-box" decisions and you remain in complete control.
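The idea of permissioned autonomy can be illustrated with a small rule check: an action proceeds without review only if a rule explicitly allows it, and everything else is queued for a human. The types and names below are hypothetical and do not describe qtrl's actual rule engine.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_APPROVED = "auto_approved"
    NEEDS_REVIEW = "needs_review"


@dataclass(frozen=True)
class ProposedAction:
    kind: str         # hypothetical action type, e.g. "run_test" or "edit_script"
    environment: str  # hypothetical environment name, e.g. "staging"


@dataclass(frozen=True)
class Policy:
    # (kind, environment) pairs the team has explicitly allowed without review.
    auto_allowed: frozenset[tuple[str, str]]

    def decide(self, action: ProposedAction) -> Decision:
        """Default to human review unless a rule explicitly permits the action."""
        if (action.kind, action.environment) in self.auto_allowed:
            return Decision.AUTO_APPROVED
        return Decision.NEEDS_REVIEW


policy = Policy(auto_allowed=frozenset({("run_test", "staging")}))
```

With this policy, running a test on staging is approved automatically, while editing a script or touching production falls back to review because no rule covers it.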

Can qtrl.ai work with our existing tools and CI/CD pipeline?

Yes, qtrl is designed to integrate into real-world workflows. It offers requirements management integration, supports connections to CI/CD pipelines for automated test execution as part of your build process, and provides APIs to work with your existing toolchain. It acts as a centralized QA layer that complements your current development ecosystem rather than forcing a complete replacement.

Is qtrl suitable for teams with no prior test automation experience?

Absolutely. qtrl's progressive approach is ideal for teams starting their automation journey. You can begin by using it purely as a test management tool. When ready, you can start with simple, English-language instructions for the AI to execute, which requires no coding. This allows teams to build automation expertise and trust in the platform gradually, at their own pace.

How does qtrl handle testing across different environments and with sensitive data?

qtrl provides robust multi-environment execution capabilities. You can define different environments (dev, staging, prod) with their own variables. Crucially, sensitive data like passwords or API keys can be stored as encrypted secrets that are injected at runtime. The AI agents never have direct access to these raw secrets, ensuring security and compliance when testing across various stages of your deployment pipeline.

Alternatives

OpenMark AI Alternatives

OpenMark AI is a web-based application designed for benchmarking various large language models (LLMs) based on specific tasks. It allows developers and product teams to evaluate models by comparing metrics such as cost, speed, quality, and stability, making it easier to make informed decisions before deploying AI features. Users often seek alternatives to OpenMark AI for reasons such as pricing variations, specific feature sets, or integration capabilities that better meet their platform needs. When choosing an alternative, it is essential to consider factors such as the range of supported models, the accuracy and reliability of benchmarking results, ease of use, and any associated costs. Look for solutions that provide comprehensive insights into model performance and cost efficiency, ensuring they align with your development goals and workflow requirements.

qtrl.ai Alternatives

qtrl.ai is a modern QA platform in the automation and dev tools category. It helps software teams scale their testing efforts by combining enterprise-grade test management with trustworthy AI automation, allowing for a gradual and controlled adoption of intelligent agents. Users often explore alternatives for various reasons. These can include budget constraints, the need for different feature sets, specific platform integrations, or simply evaluating the market to ensure a tool aligns perfectly with their team's size, tech stack, and workflow maturity. When considering other options, focus on your core needs. Look for a solution that balances robust test management with the level of automation you require. Key evaluation points should include governance controls, the transparency of any AI features, ease of integration into your development lifecycle, and the overall scalability to support your team's growth without introducing unnecessary complexity.