Agenta vs OpenMark AI

Side-by-side comparison to help you choose the right product.

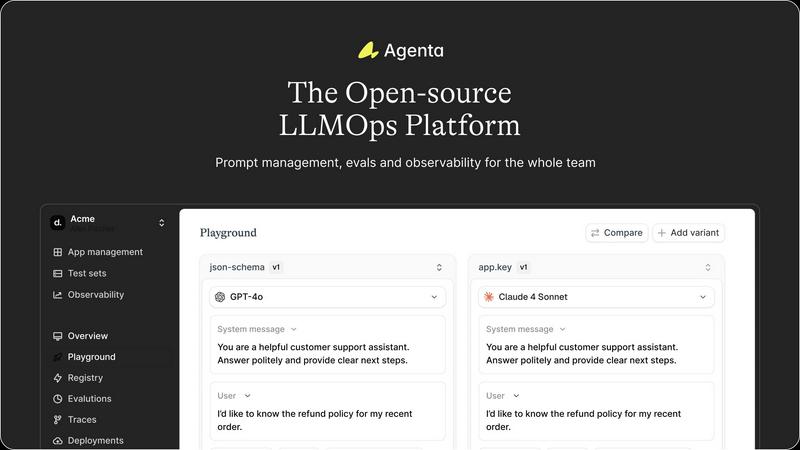

Agenta is an open-source LLMOps platform that centralizes collaboration, evaluation, and observability for building reliable LLM applications.

Last updated: March 1, 2026

OpenMark AI benchmarks more than 100 LLMs on your own tasks and reports cost, speed, quality, and stability without any setup.

Last updated: March 26, 2026


Feature Comparison

Agenta

Centralized Workflow Management

Agenta brings prompt management, evaluation, and tracing for LLM development into one platform. Teams work from a single, cohesive workflow instead of a set of scattered tools.

Unified Playground

Agenta’s unified playground lets teams compare different prompts and models side by side. This makes iteration easier and supports faster, better-informed decisions.

Automated Evaluation System

Agenta’s automated evaluation system lets teams run experiments systematically and track the results. Decisions rest on measured data rather than guesswork, so each change can be validated before deployment.

Integrated Observability Tools

Agenta’s built-in observability tools let teams trace every request and locate failure points quickly. Traces can be annotated and turned into test cases, which speeds up debugging and improves overall system reliability.

OpenMark AI

Task Benchmarking

OpenMark AI lets users benchmark AI models against tasks they define. Tasks are described in plain language, so no coding skills or technical jargon are required.

Side-by-Side Comparisons

OpenMark AI compares models side by side using real API calls, so the reported metrics reflect each model's actual performance rather than published claims.

Detailed Performance Metrics

Users can analyze key performance indicators such as cost per request, latency, and scored quality. These numbers let teams quantify model performance and make data-driven decisions when choosing a model for a project.
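Cost per request, latency, and quality come down to simple arithmetic over individual API calls. The sketch below shows one way to summarize several benchmark runs for a single model. It is a hypothetical illustration, not OpenMark AI's implementation: the `RunResult` record, the per-million-token pricing model, and the quality-per-dollar ratio are all assumptions, and real providers may price tokens differently.

```python
from dataclasses import dataclass
from statistics import mean, median


@dataclass
class RunResult:
    """One benchmark call (hypothetical record shape, not OpenMark AI's format)."""
    input_tokens: int
    output_tokens: int
    latency_s: float  # wall-clock seconds for the API call
    quality: float    # score in [0, 1] assigned by some grader


def cost_per_request(run: RunResult, in_price_per_m: float, out_price_per_m: float) -> float:
    """Dollar cost of one call, assuming separate per-million-token input and output prices."""
    return (run.input_tokens * in_price_per_m + run.output_tokens * out_price_per_m) / 1_000_000


def summarize(runs: list[RunResult], in_price_per_m: float, out_price_per_m: float) -> dict[str, float]:
    """Aggregate cost, latency, and quality for one model over several calls."""
    if not runs:
        raise ValueError("need at least one run")
    costs = [cost_per_request(r, in_price_per_m, out_price_per_m) for r in runs]
    avg_cost = mean(costs)
    avg_quality = mean([r.quality for r in runs])
    return {
        "mean_cost_usd": avg_cost,
        # Median is less sensitive than the mean to a single slow call.
        "median_latency_s": median([r.latency_s for r in runs]),
        "mean_quality": avg_quality,
        # One possible cost-efficiency ratio; zero-cost runs would need special handling.
        "quality_per_usd": avg_quality / avg_cost,
    }


if __name__ == "__main__":
    runs = [RunResult(1000, 500, 1.2, 0.8), RunResult(1000, 300, 0.9, 0.9)]
    print(summarize(runs, in_price_per_m=3.0, out_price_per_m=15.0))
```

With hypothetical prices of $3 per million input tokens and $15 per million output tokens, the first call costs (1,000 × 3 + 500 × 15) / 1,000,000 = $0.0105. Using the median for latency is a design choice; a p95 figure is another common option when tail latency matters.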

Consistency Tracking

OpenMark AI tracks how stable a model's outputs are across repeated runs, showing how consistently the model performs. This matters for applications that need reliable, predictable behavior.
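Stability across repeated runs can be measured in more than one way, and the description above does not say which measure OpenMark AI uses. The sketch below shows two common, hypothetical options: the spread of quality scores across runs, and how often runs agree on the same answer. The function names and the normalization step (trimming whitespace and lowercasing) are assumptions for illustration.

```python
from collections import Counter
from statistics import pstdev


def score_spread(scores: list[float]) -> float:
    """Population standard deviation of quality scores from repeated runs.

    Lower is more stable; 0.0 means every run received the same score.
    """
    if not scores:
        raise ValueError("need at least one score")
    return pstdev(scores)


def agreement_rate(outputs: list[str]) -> float:
    """Fraction of runs whose normalized output matches the most common one."""
    if not outputs:
        raise ValueError("need at least one output")
    # Hypothetical normalization: ignore surrounding whitespace and letter case.
    normalized = [o.strip().lower() for o in outputs]
    _, top_count = Counter(normalized).most_common(1)[0]
    return top_count / len(normalized)
```

For example, `agreement_rate(["Paris", "paris", "Paris ", "Lyon"])` returns 0.75, because three of the four normalized outputs are `paris`. Exact matching suits short, closed-form answers; open-ended outputs usually need a grader or a similarity measure instead.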

Use Cases

Agenta

Collaborative Prompt Development

Agenta suits teams that want to improve their prompt engineering process. Developers, product managers, and domain experts work on prompts in one shared platform, which leads to better prompt designs and better application performance.

Systematic Experimentation

Teams can use Agenta to run systematic experiments that compare prompts and model outputs. This supports rapid iteration and more effective testing, which improves the quality of LLM applications.

Performance Monitoring

Agenta’s observability features let teams monitor their LLM applications in real time. By tracing requests and catching regressions, teams can keep their systems reliable and effective.

Evidence-Based Decision Making

Agenta helps teams make decisions based on systematic evaluations and feedback from domain experts. This reduces the risk of deploying LLM applications and gives teams more confidence in each release.

OpenMark AI

Model Selection for Development

OpenMark AI helps development teams choose the most suitable model for their applications. Benchmarking against their own tasks shows which models perform best under their specific requirements.

Cost Analysis for AI Implementations

Product managers can use OpenMark AI to compare the costs of different models. This clarifies the financial implications of each option and helps them choose the best balance of performance and cost.

Quality Assurance Testing

Quality assurance teams can use OpenMark AI to validate the outputs of chosen models. Running repeated tests and comparing the results shows whether a model consistently meets quality standards before deployment.

Research and Development Initiatives

Researchers exploring advanced AI capabilities can use OpenMark AI to benchmark emerging models and assess their effectiveness and stability, which supports informed decisions in AI research.

Overview

About Agenta

Agenta is an open-source LLMOps platform designed to address the core challenges AI teams face when building and deploying reliable large language model (LLM) applications. It serves as a central hub where developers, product managers, and subject matter experts collaborate, helping teams move from chaotic, siloed workflows to structured, evidence-based processes. To manage the unpredictable behavior of LLMs, Agenta provides integrated tools for the entire development lifecycle: teams can experiment with prompts and models in a unified playground, run systematic evaluations (both automated and human) to validate changes, and monitor production systems with detailed tracing to find and debug issues quickly. By providing a single source of truth in place of guesswork, Agenta helps teams iterate faster, deploy with confidence, and maintain application performance over time. Because it is model-agnostic and open source, Agenta stays flexible and avoids vendor lock-in, making it a practical foundation for teams that want to operationalize their LLM applications.

About OpenMark AI

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). Users describe their testing requirements in plain language, so the evaluation process is accessible to people without extensive technical expertise. OpenMark AI runs the same prompts across many models at once and reports cost per request, latency, scored quality, and stability across multiple runs. This is useful for developers and product teams who need to select or validate a model before integrating AI features into their products. Because benchmarks are hosted and paid for with credits, users do not have to manage separate API keys for OpenAI, Anthropic, or Google. OpenMark AI emphasizes real-world performance, showing the results of actual API calls rather than relying on potentially misleading marketing figures. Its focus on cost efficiency lets users weigh output quality against expense and choose the most effective model for their specific workflows. Free and paid plans are available; details are in the in-app billing section.

Frequently Asked Questions

Agenta FAQ

What is LLMOps?

LLMOps refers to the operational practices and tools designed to manage the lifecycle of large language models. It encompasses prompt management, experimentation, evaluation, and deployment to ensure reliable application performance.

How does Agenta improve collaboration among team members?

Agenta fosters collaboration by centralizing workflows and providing a platform where product managers, developers, and subject matter experts can work together. This reduces silos and enhances communication, making it easier to share insights and feedback.

Can Agenta integrate with existing tools and frameworks?

Yes. Agenta is model-agnostic and integrates with frameworks such as LangChain and LlamaIndex and with model providers such as OpenAI. This lets teams keep their existing tech stack and avoid vendor lock-in.

Is Agenta really open-source?

Yes. Agenta is open source, so anyone can read the code, contribute to its development, and customize it for their specific needs. This openness supports a community of developers who improve the platform together.

OpenMark AI FAQ

How does OpenMark AI simplify the benchmarking process?

OpenMark AI simplifies benchmarking by letting users describe their tasks in plain language, with no complex coding or technical setup. This makes it accessible to users of all skill levels.

What types of models can I benchmark using OpenMark AI?

OpenMark AI supports models from many providers, including OpenAI, Anthropic, and Google. Its catalog lets users test more than 100 models against their specific tasks.

Is OpenMark AI suitable for non-technical users?

Yes. OpenMark AI is designed so that people without technical backgrounds can benchmark AI models effectively. Its interface and plain-language task descriptions make it easy to use.

Can I track performance consistency with OpenMark AI?

Yes. OpenMark AI tracks the consistency of model outputs across multiple runs, showing how reliably a model performs. This is critical for applications that require stable results.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform that serves as a central hub for teams building reliable AI applications. It is designed to streamline the development lifecycle and lets developers, product managers, and subject matter experts collaborate effectively instead of working in chaotic, siloed workflows. Users often look for alternatives to Agenta because of pricing, specific feature requirements, or compatibility with their existing platforms and workflows. When evaluating alternatives, consider the platform's flexibility, its integration support, and whether it meets your team's particular needs.

OpenMark AI Alternatives

OpenMark AI is a web-based application for benchmarking large language models (LLMs) on specific tasks. Developers and product teams use it to compare models on cost, speed, quality, and stability, which makes it easier to make informed decisions before deploying AI features. Users often look for alternatives because of pricing, specific feature sets, or integration capabilities that better fit their platform. When choosing an alternative, consider the range of supported models, the accuracy and reliability of the benchmark results, ease of use, and cost. Look for tools that give clear insight into model performance and cost efficiency and that fit your development goals and workflow.