Agenta vs Fallom

Side-by-side comparison to help you choose the right product.

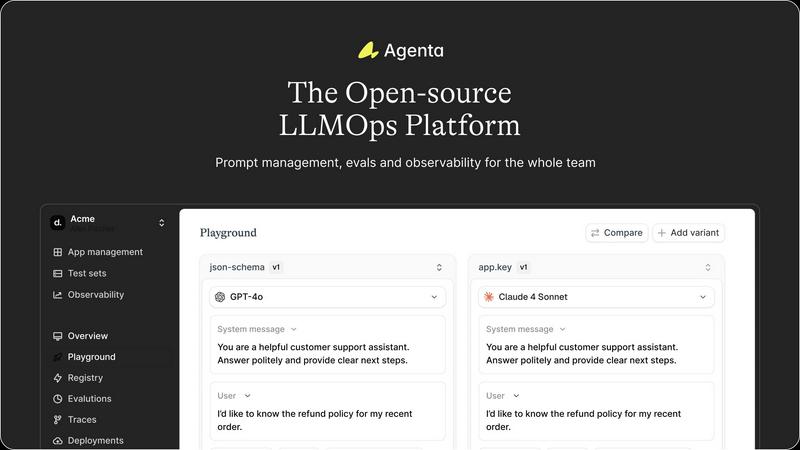

Agenta is an open-source LLMOps platform that centralizes collaboration, evaluation, and observability for building reliable AI applications.

Last updated: March 1, 2026

Fallom provides real-time observability for LLMs, supporting tracing, debugging, and cost management for AI workloads.

Last updated: February 28, 2026

Feature Comparison

Agenta

Centralized Workflow Management

Agenta brings the main parts of LLM development, including prompt management, evaluations, and system traces, into one platform. This replaces a patchwork of scattered tools and lets teams keep a single, consistent workflow.

Unified Playground

With Agenta’s unified playground, teams can test different prompts and models side by side. This makes comparisons easy and supports iterative development, so teams can reach decisions faster and with better information.

Automated Evaluation System

The platform includes an automated evaluation system that lets teams run experiments systematically and track the results. This replaces guesswork with data, so each change can be validated before it is deployed.
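As a rough illustration of what an automated evaluation loop does, the sketch below runs two application variants over the same test set and reports each variant's mean score. It is not Agenta's SDK or API: `EvalCase`, `run_evaluation`, the exact-match evaluator, and the two stub variants are hypothetical, and the stubs return canned answers instead of calling a model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    inputs: dict[str, str]
    expected: str


def exact_match(output: str, expected: str) -> float:
    """Return 1.0 when output equals expected, ignoring case and surrounding whitespace."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_evaluation(
    variants: dict[str, Callable[[dict[str, str]], str]],
    test_set: list[EvalCase],
    evaluator: Callable[[str, str], float] = exact_match,
) -> dict[str, float]:
    """Run each variant on the same test set and return its mean score."""
    if not test_set:
        raise ValueError("test_set must not be empty")
    results: dict[str, float] = {}
    for name, app in variants.items():
        scores = [evaluator(app(case.inputs), case.expected) for case in test_set]
        results[name] = sum(scores) / len(scores)
    return results


# Hypothetical stand-ins for two prompt/model configurations (no real LLM is called).
CAPITALS = {"France": "Paris", "Japan": "Tokyo", "Peru": "Lima"}


def variant_a(inputs: dict[str, str]) -> str:
    # Answers with the bare city name, which matches the expected format.
    return CAPITALS.get(inputs["country"], "unknown")


def variant_b(inputs: dict[str, str]) -> str:
    # Wraps the answer in a sentence, so exact match fails.
    return f"The capital is {CAPITALS.get(inputs['country'], 'unknown')}."


test_set = [EvalCase({"country": c}, city) for c, city in CAPITALS.items()]
print(run_evaluation({"variant_a": variant_a, "variant_b": variant_b}, test_set))
# {'variant_a': 1.0, 'variant_b': 0.0}
```

Exact match is only the simplest possible evaluator; swapping in a different `evaluator` function (for example a similarity score or a human rating) leaves the loop unchanged.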

Integrated Observability Tools

Agenta provides integrated observability tools that let teams trace every request and quickly locate failure points. Teams can annotate traces and turn them into test cases, which streamlines debugging and improves overall system reliability.
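The idea of turning annotated traces into tests can be sketched as a filter over reviewed traces. The `Trace` and `Annotation` shapes and the `traces_to_tests` helper below are hypothetical, not Agenta's data model; the sketch assumes a reviewer records a verdict and the output the application should have produced.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Annotation:
    label: str     # hypothetical reviewer verdict, e.g. "correct" or "incorrect"
    expected: str  # the output the reviewer says the application should have produced


@dataclass
class Trace:
    trace_id: str
    inputs: dict[str, str]
    output: str
    annotation: Optional[Annotation] = None


def traces_to_tests(traces: list[Trace], only_failures: bool = True) -> list[dict]:
    """Turn reviewed production traces into regression test cases."""
    tests = []
    for trace in traces:
        ann = trace.annotation
        if ann is None:
            continue  # unreviewed traces have no ground truth to test against
        if only_failures and ann.label != "incorrect":
            continue  # by default, keep only the failures a reviewer flagged
        tests.append(
            {
                "id": f"regression-{trace.trace_id}",
                "inputs": trace.inputs,
                "expected": ann.expected,
            }
        )
    return tests


traces = [
    Trace("t1", {"question": "2+2?"}, "5", Annotation("incorrect", "4")),
    Trace("t2", {"question": "3+3?"}, "6", Annotation("correct", "6")),
    Trace("t3", {"question": "1+1?"}, "2"),  # never reviewed
]
print(traces_to_tests(traces))
# [{'id': 'regression-t1', 'inputs': {'question': '2+2?'}, 'expected': '4'}]
```

Keeping only flagged failures by default is one design choice; passing `only_failures=False` also keeps traces a reviewer confirmed as correct, which can serve as a baseline.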

Fallom

Real-Time Observability

Fallom provides real-time observability for AI agents, letting users track every tool call, analyze timing, and debug problems. Live traces of LLM interactions make it easier to spot performance bottlenecks and inefficient steps.
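Conceptually, tracking each tool call and its timing means wrapping the call, recording when it started and how long it took, and noting whether it failed. The stdlib-only sketch below does that with a hypothetical `traced_tool` decorator and an in-memory `SPANS` list. It is not Fallom's SDK, which the vendor describes as OpenTelemetry-native, so a real integration would emit OpenTelemetry spans instead.

```python
import functools
import time

# Hypothetical in-memory span store; a real setup would export spans to a backend.
SPANS: list[dict] = []
_TRACE_START = time.perf_counter()


def traced_tool(func):
    """Record the start offset, duration, and status of each call to func."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        status = "ok"
        try:
            return func(*args, **kwargs)
        except Exception:
            status = "error"
            raise  # re-raise so callers still see the failure
        finally:
            end = time.perf_counter()
            SPANS.append(
                {
                    "tool": func.__name__,
                    "start_ms": (start - _TRACE_START) * 1000,
                    "duration_ms": (end - start) * 1000,
                    "status": status,
                }
            )

    return wrapper


@traced_tool
def search(query: str) -> list[str]:
    time.sleep(0.02)  # simulate a slow external call
    return [f"doc about {query}"]


@traced_tool
def summarize(docs: list[str]) -> str:
    time.sleep(0.005)
    return " / ".join(docs)


summarize(search("latency"))
slowest = max(SPANS, key=lambda span: span["duration_ms"])
print(f"slowest tool: {slowest['tool']} ({slowest['duration_ms']:.1f} ms)")
```

Because each span stores its start offset as well as its duration, the same records could be laid out as a timing waterfall for a multi-step agent.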

Cost Attribution

With Fallom, organizations can track spending per model, user, and team, giving full cost transparency for budgeting and financial planning. This also supports accurate chargeback, so AI costs can be billed back to the teams that incurred them.
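A simple way to picture per-model, per-user, and per-team cost attribution is to price each call from its token counts and then sum along the dimension of interest. In the sketch below, the prices, model names, and record fields are hypothetical placeholders, not real rates or Fallom's schema.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1M tokens, for illustration only (not real list prices).
PRICES_PER_MILLION = {
    "model-a": {"input": 1.00, "output": 4.00},
    "model-b": {"input": 0.20, "output": 0.80},
}


def call_cost(record: dict) -> float:
    """Cost of one LLM call from its token counts and the model's price table."""
    price = PRICES_PER_MILLION[record["model"]]
    return (
        record["input_tokens"] * price["input"]
        + record["output_tokens"] * price["output"]
    ) / 1_000_000


def attribute_costs(records: list[dict], key: str) -> dict[str, float]:
    """Sum call costs grouped by a dimension such as "model", "user", or "team"."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[record[key]] += call_cost(record)
    return dict(totals)


# Hypothetical usage records, one per LLM call.
usage = [
    {"model": "model-a", "user": "alice", "team": "search", "input_tokens": 1000, "output_tokens": 500},
    {"model": "model-b", "user": "bob", "team": "search", "input_tokens": 2000, "output_tokens": 1000},
    {"model": "model-a", "user": "bob", "team": "support", "input_tokens": 500, "output_tokens": 250},
]

for dimension in ("model", "user", "team"):
    print(dimension, {k: round(v, 6) for k, v in attribute_costs(usage, dimension).items()})
```

Since every call is priced once and then grouped, the per-model, per-user, and per-team totals always add up to the same overall spend, which is what makes chargeback reports reconcile.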

Compliance Ready

Fallom is designed with compliance in mind and offers complete audit trails to support regulatory requirements such as the EU AI Act, SOC 2, and GDPR. Features such as input/output logging, model versioning, and user consent tracking help organizations meet these standards.

Session Tracking

The session tracking feature groups traces by session, user, or customer, so every interaction comes with its full context. Teams can use this to analyze user behavior and assess how changes affect specific user groups.
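Grouping traces by session, user, or customer can be sketched as a keyed group-by followed by a time sort within each group. The `TraceRecord` fields and the `group_traces` and `summarize` helpers below are hypothetical, not Fallom's data model.

```python
from collections import defaultdict
from dataclasses import dataclass

# Dimensions the hypothetical grouping helper accepts.
GROUP_KEYS = {"session_id", "user_id", "customer_id"}


@dataclass
class TraceRecord:
    trace_id: str
    session_id: str
    user_id: str
    customer_id: str
    timestamp: float   # seconds since the epoch
    latency_ms: float


def group_traces(traces: list[TraceRecord], by: str = "session_id") -> dict[str, list[TraceRecord]]:
    """Group traces by session, user, or customer, each group sorted by time."""
    if by not in GROUP_KEYS:
        raise ValueError(f"by must be one of {sorted(GROUP_KEYS)}")
    groups: dict[str, list[TraceRecord]] = defaultdict(list)
    for trace in traces:
        groups[getattr(trace, by)].append(trace)
    for items in groups.values():
        items.sort(key=lambda t: t.timestamp)
    return dict(groups)


def summarize(groups: dict[str, list[TraceRecord]]) -> dict[str, dict]:
    """Per-group context: trace count, total latency, and the ordered trace IDs."""
    return {
        key: {
            "traces": len(items),
            "total_latency_ms": sum(t.latency_ms for t in items),
            "trace_ids": [t.trace_id for t in items],
        }
        for key, items in groups.items()
    }


traces = [
    TraceRecord("t3", "s1", "alice", "acme", 30.0, 120.0),
    TraceRecord("t1", "s1", "alice", "acme", 10.0, 80.0),
    TraceRecord("t2", "s2", "bob", "acme", 20.0, 200.0),
]
print(summarize(group_traces(traces)))
print(summarize(group_traces(traces, by="customer_id")))
```

Sorting within each group restores the order in which a user actually experienced the interactions, even when traces arrive out of order.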

Use Cases

Agenta

Collaborative Prompt Development

Agenta suits teams that want to improve their prompt engineering process. Because the work lives on one platform, developers, product managers, and domain experts can collaborate directly, which leads to better prompt designs and improved model performance.

Systematic Experimentation

Teams can use Agenta to conduct systematic experimentation, comparing various prompts and model outputs. This capability allows for rapid iterations and more effective testing, ultimately improving the quality of LLM applications.

Performance Monitoring

Agenta’s observability features let teams monitor the performance of their LLM applications in real time. By tracing requests and identifying regressions, teams can keep their systems reliable and effective.

Evidence-Based Decision Making

The platform empowers teams to make informed decisions based on systematic evaluations and feedback from domain experts. This evidence-based approach reduces the risk associated with deploying LLM applications and enhances overall team confidence.

Fallom

Performance Monitoring

Organizations can use Fallom to monitor the performance of their LLMs in real time. By analyzing latency and response times, teams can find and fix performance issues before they affect end users.

Cost Management

Fallom enables teams to manage and allocate their AI spending effectively. By tracking costs associated with different models and user interactions, organizations can optimize their budgets and ensure that resources are being used efficiently.

Regulatory Compliance

For companies in regulated industries, Fallom provides tools that help maintain compliance with relevant laws and standards. Its audit trails and privacy controls help organizations work through complex regulatory requirements.

Debugging and Optimization

Fallom is aimed at developers and data scientists who want to optimize their LLM deployments. With detailed tracing and session tracking, teams can pinpoint issues, evaluate model outputs, and make data-driven adjustments to improve performance.

Overview

About Agenta

Agenta is an open-source LLMOps platform designed to address the core challenges AI teams face when building and deploying reliable large language model (LLM) applications. It serves as a centralized hub where developers, product managers, and subject matter experts collaborate, helping teams move from chaotic, siloed workflows to structured, evidence-based processes. Agenta counters the unpredictable nature of LLMs with integrated tools across the entire development lifecycle: teams can experiment with prompts and models in a unified playground, run systematic evaluations, both automated and human, to validate changes, and monitor production systems with detailed tracing to identify and debug issues quickly. By providing a single source of truth and replacing guesswork with structured workflows, Agenta helps teams iterate faster, deploy with confidence, and maintain application performance over time. Because it is model-agnostic and open source, Agenta stays flexible and avoids vendor lock-in, making it a practical foundation for teams that want to operationalize their LLM applications.

About Fallom

Fallom is an AI-native observability platform for monitoring and optimizing large language model (LLM) and agent workloads. It gives organizations real-time visibility into every LLM call, with end-to-end tracing that captures the prompt, output, tool calls, tokens, latency, and cost of each interaction. This level of detail serves developers, data scientists, and operations teams who need real-time insight to evaluate LLM performance and troubleshoot issues efficiently. Fallom also provides session-, user-, and customer-level context, timing waterfalls for multi-step agents, and audit trails for compliance, including input/output logging, model versioning, and consent tracking. With a single OpenTelemetry-native SDK, teams can set up monitoring in minutes, then watch usage live, debug issues quickly, and allocate spending accurately across models, users, and teams.

Frequently Asked Questions

Agenta FAQ

What is LLMOps?

LLMOps refers to the operational practices and tools designed to manage the lifecycle of large language models. It encompasses prompt management, experimentation, evaluation, and deployment to ensure reliable application performance.

How does Agenta improve collaboration among team members?

Agenta fosters collaboration by centralizing workflows and providing a platform where product managers, developers, and subject matter experts can work together. This reduces silos and enhances communication, making it easier to share insights and feedback.

Can Agenta integrate with existing tools and frameworks?

Yes. Agenta is model-agnostic and integrates with a range of frameworks and model providers, including LangChain, LlamaIndex, and OpenAI. This lets teams keep their existing tech stack without vendor lock-in.

Is Agenta really open-source?

Yes. Agenta is open source, so anyone can read the code, contribute to its development, and customize it for their own needs. This openness supports a community of developers who improve the platform together.

Fallom FAQ

What types of organizations can benefit from Fallom?

Fallom is designed for organizations that utilize large language models, including tech companies, financial institutions, healthcare providers, and any enterprise that relies on AI-driven interactions. Its versatile features cater to developers, data scientists, and operational teams.

How quickly can I set up Fallom?

Setting up Fallom is straightforward and can be accomplished in under five minutes using its OpenTelemetry-native SDK. This quick setup allows teams to start monitoring their LLMs and agents almost immediately.

Is Fallom compliant with data protection regulations?

Yes, Fallom is built with compliance in mind, featuring complete audit trails, logging capabilities, and consent tracking to meet regulatory requirements such as GDPR and the EU AI Act.

Can Fallom integrate with existing tools?

Fallom is designed to work with any provider through its OpenTelemetry compatibility, so organizations can keep using their existing tools without being locked into a single vendor.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform that serves as a central hub for teams building reliable AI applications. It streamlines the development lifecycle and helps developers, product managers, and subject matter experts collaborate, reducing chaotic and siloed workflows. Users look for alternatives to Agenta for several reasons, including pricing, specific feature requirements, or compatibility with existing platforms and workflows. When evaluating alternatives, consider the platform's flexibility, its integration support, and whether it meets your team's particular needs.

Fallom Alternatives

Fallom is an AI-native observability platform that specializes in monitoring and optimizing large language model (LLM) and agent workloads. It gives organizations visibility into every LLM call made in production, which supports tracking, debugging, and cost management for AI applications. Users look for alternatives to Fallom for several reasons, including pricing, specific feature requirements, or compatibility with their existing technology stack.

When choosing an alternative, start from your team's specific requirements and the challenges you face in monitoring LLMs. Assess real-time observability, cost attribution, compliance readiness, and session tracking, and check that the platform integrates with your current systems. Also confirm that it supports compliance with the regulations that apply to your AI workloads.